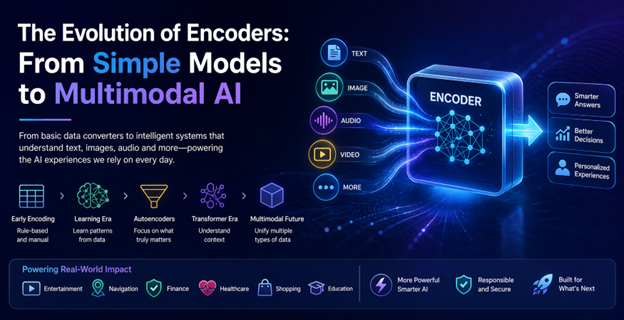

Understanding Encoders: The Foundation of AI Comprehension

Artificial intelligence usually attracts attention through its outputs: human-like text, vivid images, and precise recommendations. Much less visible is how AI interprets the information it receives in the first place. That interpretation begins with encoders, which act as translators, converting complex, unstructured real-world data into a structured numerical format that machines can process effectively.

The Early Days: Encoding as a Basic Technical Step

Initially, encoding was a straightforward, largely manual process of converting labels or categories into numerical values. For example, early systems represented items as “small,” “medium,” or “large” by assigning a number to each term. While functional, these encoders did not capture the meaning behind the data; they processed numbers without any sense of context or relationships.
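A minimal Python sketch of this kind of hand-built encoding follows. The table `SIZE_CODES` and the function `encode_sizes` are hypothetical names chosen for illustration. The numbers preserve only the order a person chose, and a label the table has never seen, such as “extra large”, simply fails.

```python
# Hypothetical hand-written lookup table, the kind of fixed mapping early systems relied on.
SIZE_CODES = {"small": 0, "medium": 1, "large": 2}


def encode_sizes(labels):
    """Map each size label to its fixed integer code.

    Raises KeyError for any label missing from the table: the encoder has no
    notion of what a size is, only of the entries a person typed in.
    """
    return [SIZE_CODES[label] for label in labels]


print(encode_sizes(["large", "small", "medium"]))  # [2, 0, 1]

try:
    encode_sizes(["extra large"])
except KeyError as missing:
    print("unknown label:", missing)
```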

These limitations meant that early recommendation systems, such as those in online stores, could not recognize that two products were related unless someone explicitly programmed that connection.
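One-hot encoding, a common scheme of this kind, makes the problem concrete. In this sketch the product names and helper functions are hypothetical. Each product becomes a vector with a single 1, so every pair of different products has a dot product of 0: running shoes look exactly as unrelated to running socks as to a frying pan.

```python
PRODUCTS = ["running shoes", "running socks", "frying pan"]  # hypothetical catalog


def one_hot(product):
    """Return a vector with a 1 in the product's position and 0 elsewhere."""
    return [1 if p == product else 0 for p in PRODUCTS]


def dot(a, b):
    """Dot product: a simple similarity score between two vectors."""
    return sum(x * y for x, y in zip(a, b))


shoes, socks, pan = (one_hot(p) for p in PRODUCTS)
print(dot(shoes, socks), dot(shoes, pan))  # 0 0
```

Any relationship between products therefore had to be supplied by hand, for example as an explicit rule linking shoes and socks.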

Transition to Learning: Neural Networks and Pattern Recognition

The introduction of neural networks revolutionized encoding by enabling systems to learn patterns directly from data rather than relying solely on human guidance. In image recognition, for instance, encoders no longer needed to be told what features defined an object; they learned to identify patterns such as shapes and textures from thousands of examples.

Similarly, in natural language processing, words went from being treated as arbitrary symbols to being represented as mathematical vectors (embeddings) that capture semantic relationships. This lets modern AI recognize that phrases like “cheap flights” and “budget airfare” mean nearly the same thing, which improves search accuracy and the capabilities of conversational AI.
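Similarity between such vectors is commonly measured with cosine similarity. The sketch below uses hand-picked, hypothetical four-dimensional vectors purely to show the arithmetic. A real model learns its embeddings from data and typically uses hundreds of dimensions.

```python
import math

# Hypothetical phrase embeddings: toy values chosen by hand, not output of a real model.
embeddings = {
    "cheap flights": [0.9, 0.8, 0.1, 0.0],
    "budget airfare": [0.8, 0.9, 0.2, 0.1],
    "chocolate cake": [0.0, 0.1, 0.9, 0.8],
}


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: near 1.0 means 'pointing the same way'."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


for other in ("budget airfare", "chocolate cake"):
    score = cosine_similarity(embeddings["cheap flights"], embeddings[other])
    print(f"cheap flights vs {other}: {score:.2f}")
```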

Autoencoders: Identifying Essential Data Features

Autoencoders marked a significant advance by learning to compress data and then reconstruct it. An encoder maps the input to a smaller internal representation, and a decoder tries to rebuild the original input from that representation. Because the compressed form cannot hold everything, training pushes the model to keep the most informative features and discard irrelevant detail.
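The compress-then-reconstruct loop can be illustrated with a deliberately tiny linear autoencoder in plain Python. This is a sketch under simple assumptions, not a production model. The toy points all lie on the line x2 = 2 * x1, so a single number (the bottleneck) is enough to describe each one. Gradient descent then learns encoder weights `a` and decoder weights `b` (hypothetical names) that rebuild each point from that number.

```python
# Toy data (invented for illustration): every point lies on the line x2 = 2 * x1,
# so a single number is enough to describe each point.
data = [(x, 2 * x) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]

a = [0.1, 0.1]  # encoder weights: 2 features -> 1 bottleneck value
b = [0.1, 0.1]  # decoder weights: 1 bottleneck value -> 2 features
lr = 0.05       # learning rate for plain gradient descent


def forward(x):
    """Compress x to one number z, then reconstruct two features from z."""
    z = a[0] * x[0] + a[1] * x[1]
    return z, (b[0] * z, b[1] * z)


def mean_loss():
    """Mean squared reconstruction error over the dataset."""
    total = 0.0
    for x in data:
        _, r = forward(x)
        total += (r[0] - x[0]) ** 2 + (r[1] - x[1]) ** 2
    return total / len(data)


initial_loss = mean_loss()
for _ in range(500):
    grad_a, grad_b = [0.0, 0.0], [0.0, 0.0]
    for x in data:
        z, r = forward(x)
        err = (r[0] - x[0], r[1] - x[1])          # reconstruction error per feature
        dz = 2 * (err[0] * b[0] + err[1] * b[1])  # d(loss)/dz via the chain rule
        for i in range(2):
            grad_b[i] += 2 * err[i] * z
            grad_a[i] += dz * x[i]
    # Apply the averaged gradients only after all of them are computed.
    for i in range(2):
        a[i] -= lr * grad_a[i] / len(data)
        b[i] -= lr * grad_b[i] / len(data)

final_loss = mean_loss()
print(f"reconstruction loss: {initial_loss:.3f} -> {final_loss:.6f}")
```

Real autoencoders add nonlinear layers and much larger inputs, but the training signal is the same: the difference between the input and its reconstruction.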

Practical applications of autoencoders include fraud detection in banking, where the model learns typical transaction patterns and flags transactions it reconstructs poorly as possible anomalies, and image compression, where important visual details are preserved while file sizes shrink.
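One common way to turn an autoencoder into an anomaly detector is to score each record by its reconstruction error and flag scores above a threshold. In this sketch the “learned” projection direction is simply assumed rather than trained. The transaction features are invented toy numbers, and the threshold rule (three times the largest error on normal data) is one arbitrary choice among many.

```python
import math

# Assumption: a trained linear autoencoder has learned to project onto the direction
# (1, 2) / sqrt(5), i.e. "normal" records satisfy feature_2 ≈ 2 * feature_1.
U = (1 / math.sqrt(5), 2 / math.sqrt(5))


def reconstruct(x):
    """Encode x to one number, then decode it back to two features."""
    z = U[0] * x[0] + U[1] * x[1]
    return (U[0] * z, U[1] * z)


def reconstruction_error(x):
    """Squared distance between a record and its reconstruction."""
    r = reconstruct(x)
    return (x[0] - r[0]) ** 2 + (x[1] - r[1]) ** 2


# Hypothetical "normal" transactions (toy values, not real banking data).
normal = [(1.0, 2.1), (2.0, 3.9), (0.5, 1.0), (3.0, 6.2)]
threshold = 3 * max(reconstruction_error(x) for x in normal)


def is_anomaly(x):
    """Flag records the model reconstructs much worse than anything it saw as normal."""
    return reconstruction_error(x) > threshold


print(is_anomaly((1.5, 3.05)), is_anomaly((2.0, 1.0)))  # False True
```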

The Transformer Revolution: Grasping Context at Scale

Transformer models changed encoding again by letting AI weigh an entire context at once rather than processing data strictly in sequence. This ability helps with ambiguous language, such as the sentence “She saw the man with the telescope.” Using a mechanism called self-attention, a transformer relates every word to every other word, so it can draw on the whole sentence, and on surrounding text, to judge whether she used the telescope or the man was holding it.
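The core of self-attention can be sketched in a few lines of plain Python. This is a simplified, hypothetical version: real transformers learn separate query, key, and value projections and use many attention heads, whereas here the token vectors are used directly and their values are invented. It shows only the central computation: each token's output is a weighted mix of all tokens, with weights from a softmax over scaled dot products.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = vectors."""
    d = len(vectors[0])
    outputs, weight_rows = [], []
    for q in vectors:
        # Compare this token with every token in the sequence, including itself.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in vectors]
        weights = softmax(scores)
        # The output is a weighted average of all token vectors.
        mixed = [sum(w * v[j] for w, v in zip(weights, vectors)) for j in range(d)]
        outputs.append(mixed)
        weight_rows.append(weights)
    return outputs, weight_rows


# Hypothetical 3-dimensional vectors for four words of the example sentence.
tokens = ["she", "saw", "man", "telescope"]
vectors = [[1.0, 0.0, 0.2], [0.3, 1.0, 0.5], [0.9, 0.1, 0.4], [0.2, 0.8, 1.0]]

outputs, weights = self_attention(vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```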

Transformers underpin many popular AI tools, including chatbots, voice dictation, and translation services, making interactions with technology more intuitive and human-like.

Everyday Impact: Encoders in Daily Technology

Encoders are embedded in numerous everyday technologies. Streaming services use them to analyze viewing preferences, dynamically recommending content based on nuanced user behavior rather than broad categories. Navigation apps process real-time traffic and user data to optimize routes efficiently, often predicting congestion before it occurs.

In healthcare, encoders assist medical professionals by interpreting diagnostic images, highlighting areas of concern to support faster and more accurate decision-making.

Multimodal Encoders: Integrating Diverse Data Types

The latest evolution in encoding technology is multimodal encoders, which process various data types—such as text, images, and audio—simultaneously. This capability enables richer, more natural interactions with AI systems.

For example, a user can photograph a plant and ask for care instructions; the multimodal encoder analyzes the image alongside the query to provide an informed response. Similarly, in online shopping, users can upload product images to find visually and contextually similar items without typing descriptions.
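Image-based product search of this kind is commonly built on a shared embedding space: an encoder maps photos and catalog items to vectors, and the closest vectors are returned. This sketch assumes such an encoder already exists and replaces its output with hypothetical three-dimensional toy vectors; only the ranking step is shown, and the names `catalog` and `search` are illustrative.

```python
import math


def cosine(a, b):
    """Cosine similarity: 1.0 means the vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


# Assumption: a multimodal encoder (not shown) has already mapped each catalog
# photo into a shared embedding space. These 3-dimensional values are invented.
catalog = {
    "green ceramic planter": [0.9, 0.1, 0.2],
    "red running shoe": [0.1, 0.9, 0.1],
    "terracotta plant pot": [0.8, 0.2, 0.3],
}


def search(query_vec, k=2):
    """Return the names of the k catalog items most similar to the query."""
    ranked = sorted(catalog, key=lambda name: cosine(query_vec, catalog[name]), reverse=True)
    return ranked[:k]


# Hypothetical embedding of a photo a shopper uploads of a plant pot.
photo_embedding = [0.85, 0.15, 0.25]
print(search(photo_embedding))
```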

This fusion of data types moves AI closer to how humans perceive and interpret the world.

Challenges Accompanying Encoder Advancements

Despite their power, advanced encoders demand significant computational resources, raising concerns about sustainability and accessibility. Additionally, because they learn from data, encoders may perpetuate biases present in their training datasets, such as unfair preferences in hiring algorithms.

Privacy is another critical issue, as encoders frequently handle sensitive personal information, necessitating robust data protection measures. Balancing innovation with ethical responsibility remains an ongoing challenge for AI development.

The Road Ahead: Refinement and Personalization

The future of encoders focuses on enhancing efficiency, reducing resource consumption, and bringing advanced AI capabilities within reach of smaller organizations and individual developers. Real-time personalization is expected to become more prevalent, with systems adapting dynamically to user behavior to offer tailored experiences.

Continued improvements in multimodal processing will enable even more seamless integration of different data formats, fostering interactions with AI that feel as natural as human conversation.

Conclusion: The Quiet Engine Driving AI Progress

Though encoders often operate behind the scenes, their evolution from simple numeric converters to complex, multimodal interpreters has fundamentally expanded AI’s capabilities. By meeting practical challenges—from language understanding to fraud detection—they have reshaped how machines interact with information and, ultimately, with humans.

As AI technologies advance, encoders will remain central to transforming raw data into insightful, actionable knowledge that powers intelligent systems worldwide.

Source: see the original article
