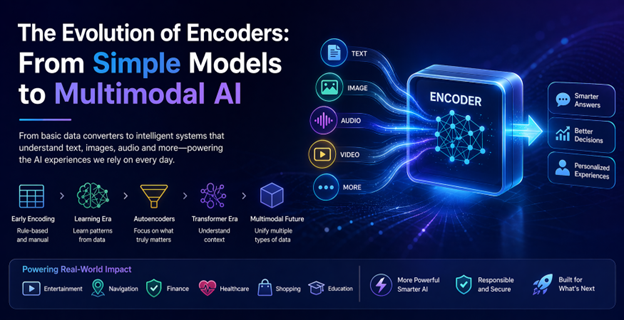

Understanding the Backbone of AI: What Are Encoders?

Artificial intelligence often captures public attention through its outputs—human-like conversations, vivid images, and accurate recommendations. However, the essential process of how AI comprehends input data starts with encoders. These components act as translators, converting complex, unstructured real-world information into an organized language that machines can process efficiently.

The Early Days: Encoding as a Basic Technical Step

Initially, encoding was a rudimentary step in machine learning. Developers manually mapped data categories, such as “small,” “medium,” and “large,” to numerical values for AI systems to process. Although functional, this approach captured nothing about how categories relate to one another, which limited AI’s ability to infer subtle connections. For instance, early recommendation engines could suggest items within predefined categories but failed to link related products like running shoes with fitness accessories unless explicitly programmed to do so.
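To make the limitation concrete, here is a minimal sketch of hand-written encoding in Python. The lookup table, the function names, and the three-item product list are illustrative assumptions, not taken from any particular system.

```python
# Hand-written lookup table: the developer, not the model, picks the numbers.
SIZE_TO_ORDINAL = {"small": 0, "medium": 1, "large": 2}


def encode_ordinal(label):
    """Map a size label to a single integer that preserves its order."""
    return SIZE_TO_ORDINAL[label]


def encode_one_hot(label, categories):
    """Map a label to a vector with a 1 in its own slot and 0 elsewhere."""
    return [1 if label == category else 0 for category in categories]


# Hypothetical product catalogue, used only for illustration.
PRODUCTS = ["running shoes", "water bottle", "desk lamp"]

shoes = encode_one_hot("running shoes", PRODUCTS)
bottle = encode_one_hot("water bottle", PRODUCTS)

# The dot product of two different one-hot vectors is always 0, so the
# encoding carries no hint that running shoes and a water bottle are related.
overlap = sum(a * b for a, b in zip(shoes, bottle))

print(encode_ordinal("medium"))
print(shoes, bottle, overlap)
```

Every pair of distinct products looks equally unrelated under this scheme, which is why any link between them had to be programmed by hand.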

From Manual Rules to Learning Patterns

The introduction of neural networks marked a pivotal shift. Encoders evolved beyond simple converters into learning systems that identify patterns autonomously from vast datasets. In image recognition, instead of defining specific features like a cat’s whiskers or ears, AI models trained on thousands of images discerned these patterns independently, boosting accuracy and adaptability.

Similarly, language processing advanced as words were transformed into mathematical vectors encapsulating semantic meaning and relationships. This development enables modern search engines to recognize that terms like “cheap flights” and “budget airfare” are closely related despite differing wording.
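One common way to measure how related two such vectors are is cosine similarity. The sketch below assumes tiny three-dimensional vectors chosen by hand for illustration; real embeddings are learned from data and typically have hundreds of dimensions.

```python
import math

# Hypothetical embeddings, hand-picked for illustration (not learned).
EMBEDDINGS = {
    "cheap flights": [0.90, 0.80, 0.10],
    "budget airfare": [0.85, 0.75, 0.20],
    "garden hoses": [0.10, 0.20, 0.95],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


related = cosine_similarity(
    EMBEDDINGS["cheap flights"], EMBEDDINGS["budget airfare"]
)
unrelated = cosine_similarity(
    EMBEDDINGS["cheap flights"], EMBEDDINGS["garden hoses"]
)

print(f"cheap flights vs budget airfare: {related:.3f}")
print(f"cheap flights vs garden hoses:   {unrelated:.3f}")
```

A score near 1 means the two phrases point in almost the same direction in the vector space, even though they share no words.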

Autoencoders: Extracting What Truly Matters

Autoencoders introduced a transformative concept: compressing data into a compact representation and then reconstructing it, which forces the model to keep essential features and discard noise. This capability has practical applications such as fraud detection in banking, where the system learns typical transaction behavior and flags anomalies, meaning transactions it reconstructs poorly, without hand-written rules. Autoencoder-based compression applies the same idea to photos, reducing file sizes while preserving critical image details for faster loading.
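The compress-then-reconstruct idea can be sketched without a neural network. The example below is a deliberately simplified stand-in: a linear autoencoder with a one-number bottleneck, whose best weights are found by power iteration (equivalent to taking the first principal component) rather than by gradient training. The transaction features and values are hypothetical.

```python
import math


def fit_linear_autoencoder(rows, iterations=100):
    """Return (mean, w), where w is the unit direction of the 1-D bottleneck."""
    n = len(rows)
    dim = len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(dim)]
    centered = [[r[j] - mean[j] for j in range(dim)] for r in rows]
    # Covariance matrix of the centered data.
    cov = [
        [sum(c[i] * c[j] for c in centered) / n for j in range(dim)]
        for i in range(dim)
    ]
    # Power iteration converges to the direction of greatest variance.
    w = [1.0] * dim
    for _ in range(iterations):
        w = [sum(cov[i][j] * w[j] for j in range(dim)) for i in range(dim)]
        norm = math.sqrt(sum(v * v for v in w))
        w = [v / norm for v in w]
    return mean, w


def reconstruction_error(x, mean, w):
    """Encode x to one number, decode it, and measure what was lost."""
    centered = [xi - mi for xi, mi in zip(x, mean)]
    code = sum(ci * wi for ci, wi in zip(centered, w))  # encoder
    rebuilt = [code * wi for wi in w]                   # decoder
    return math.sqrt(sum((ci - ri) ** 2 for ci, ri in zip(centered, rebuilt)))


# Hypothetical "typical" transactions: (scaled amount, number of items).
TYPICAL = [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2), (4.0, 7.8), (5.0, 10.1)]

mean, w = fit_linear_autoencoder(TYPICAL)
typical_error = reconstruction_error((2.5, 5.0), mean, w)
odd_error = reconstruction_error((5.0, 1.0), mean, w)

print(f"typical transaction error: {typical_error:.3f}")
print(f"unusual transaction error: {odd_error:.3f}")
```

A transaction that follows the learned pattern survives the bottleneck almost unchanged, while one that breaks the pattern comes back badly distorted, and that large reconstruction error is the anomaly signal. A production system would use a nonlinear network and a tuned threshold.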

The Transformer Revolution: Embracing Context

The emergence of transformer models revolutionized encoding by enabling systems to process entire data sequences simultaneously rather than step-by-step. Because every word is weighed against every other word, the model can use surrounding context to resolve ambiguities, for example, deciding who holds the telescope in the sentence “She saw the man with the telescope.” Transformer-based encoders underpin many AI applications today, including chatbots, voice dictation, and real-time translation, making interactions more natural and fluid.
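The mechanism behind this whole-sequence view is usually called self-attention. The sketch below shows scaled dot-product attention in its barest form, assuming hand-picked two-dimensional token vectors and using the same vectors as queries, keys, and values. Real transformers add learned projection matrices, multiple attention heads, and positional information, all omitted here.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product attention with queries = keys = values."""
    dim = len(vectors[0])
    weights = []
    outputs = []
    for query in vectors:
        # Score this token against every token in the sequence at once.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        row = softmax(scores)
        weights.append(row)
        # The new representation is a weighted mix of all token vectors.
        outputs.append(
            [sum(wt * v[j] for wt, v in zip(row, vectors)) for j in range(dim)]
        )
    return weights, outputs


# Hypothetical token vectors, chosen by hand for illustration.
TOKENS = ["she", "saw", "the", "man"]
TOKEN_VECTORS = [[1.0, 0.0], [0.5, 0.5], [0.0, 0.2], [0.9, 0.1]]

weights, outputs = self_attention(TOKEN_VECTORS)

for token, row in zip(TOKENS, weights):
    print(token, [round(wt, 2) for wt in row])
```

Each printed row shows how much one token draws on every other token, which is how context from anywhere in the sentence can shape the representation of a single word.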

Encoders Embedded in Daily Life

Encoders subtly impact numerous aspects of everyday technology. Streaming services analyze viewing patterns to tailor recommendations beyond simple categories, learning nuanced user preferences over time. Navigation apps utilize encoders to process live traffic data and suggest optimized routes, sometimes anticipating congestion before it occurs. In healthcare, encoders assist medical professionals by analyzing diagnostic images and highlighting areas of concern, enhancing decision-making without replacing human expertise.

Multimodal Encoders: Integrating Diverse Data Types

The latest advancements have produced multimodal encoders capable of processing multiple data forms—such as text, images, and more—in parallel. This progress enables intuitive experiences like photographing a plant and querying care instructions or uploading an item’s image to find similar products online. By bridging different information types, multimodal encoders bring AI closer to human-like perception and understanding.

Challenges Accompanying Encoder Advances

While powerful, advanced encoders demand significant computational resources, raising concerns about environmental sustainability and equitable access. Bias presents another challenge, as models trained on skewed data risk perpetuating inequalities, highlighting the need for careful data curation and ongoing monitoring.

Privacy is also critical since encoders often handle sensitive personal information. Balancing innovation with responsible data protection remains a key focus area as AI technologies evolve.

Looking Ahead: Refinement and Personalization

Future developments aim to enhance encoder efficiency, making sophisticated AI accessible to smaller organizations and independent developers. Personalization is expected to grow, with encoders adapting in real time to individual user behaviors, potentially transforming education through tailored learning experiences.

Continued improvements in multimodal capabilities promise more seamless integration of diverse data, fostering interfaces that feel as natural as human interaction.

Conclusion: The Quiet Engine Driving AI Progress

Though often unnoticed, encoders are fundamental to AI’s evolution. Their journey from simple numerical mappings to intelligent, context-aware, and multimodal systems has significantly expanded AI’s potential. These advancements address practical problems, from language understanding and image recognition to fraud detection and personalized content delivery.

As artificial intelligence continues to advance, encoders will remain central, converting raw information into meaningful insights that enhance technology’s role in everyday life.
