AI Benchmarks Concentrate on Programming, Ignoring Broader Workforce

Recent research has found a significant imbalance in the focus of artificial intelligence (AI) agent benchmarks. According to the study, these benchmarks predominantly target coding-related tasks while largely overlooking other sectors of the labor market. As a result, approximately 92% of US jobs are not represented in current AI performance evaluations.

Implications for AI Development and Workforce Integration

AI agent benchmarks, which use simulated human tasks to test and improve AI capabilities, have primarily measured the ability to perform programming and software development activities. While coding is a critical technical skill and an important field for automation, this narrow focus raises concerns about the applicability and relevance of AI tools designed for broader workplace use.

Ignoring the majority of the labor market limits the understanding of how AI can assist in varied professions such as healthcare, education, customer service, manufacturing, and many others. As AI tools become more integrated into daily work routines, it is crucial that their development benchmarks reflect the diversity of real-world job functions.

Why This Matters for Workers and Businesses

The study’s findings raise important questions about which jobs AI will impact first and how AI technology can be optimized to benefit a wider range of workers. While automation in coding jobs is progressing rapidly, employees in other sectors may not experience the same level of AI assistance or disruption because their roles are less represented in AI training and evaluation.

For businesses, the overemphasis on programming tasks in AI benchmarks might lead to missed opportunities to leverage AI for cost savings and productivity improvements in other departments. Expanding AI benchmarks to include a broader spectrum of job types could unlock new efficiencies and innovations across industries.

Next Steps for AI Research and Benchmarking

Experts suggest that future AI development should aim for more inclusive and comprehensive benchmarking that captures the complexity and variety of the workforce. This approach would provide a more accurate measure of AI’s potential impact, fostering tools that assist a wider range of professionals, from freelancers and small business owners to large enterprise employees.

Such an inclusive benchmarking strategy aligns with ongoing discussions about AI’s role in society, including ethical considerations, job displacement risks, and the creation of new employment opportunities.

Source: see original article

Waymo Leverages Google DeepMind’s Genie 3 to Enhance Autonomous Driving Simulations

Waymo Leverages Google DeepMind’s Genie 3 to Enhance Autonomous Driving Simulations Tesla’s Annual Sales Decline 9% Amid Rising Competition from BYD and Loss of U.S. Tax Credit

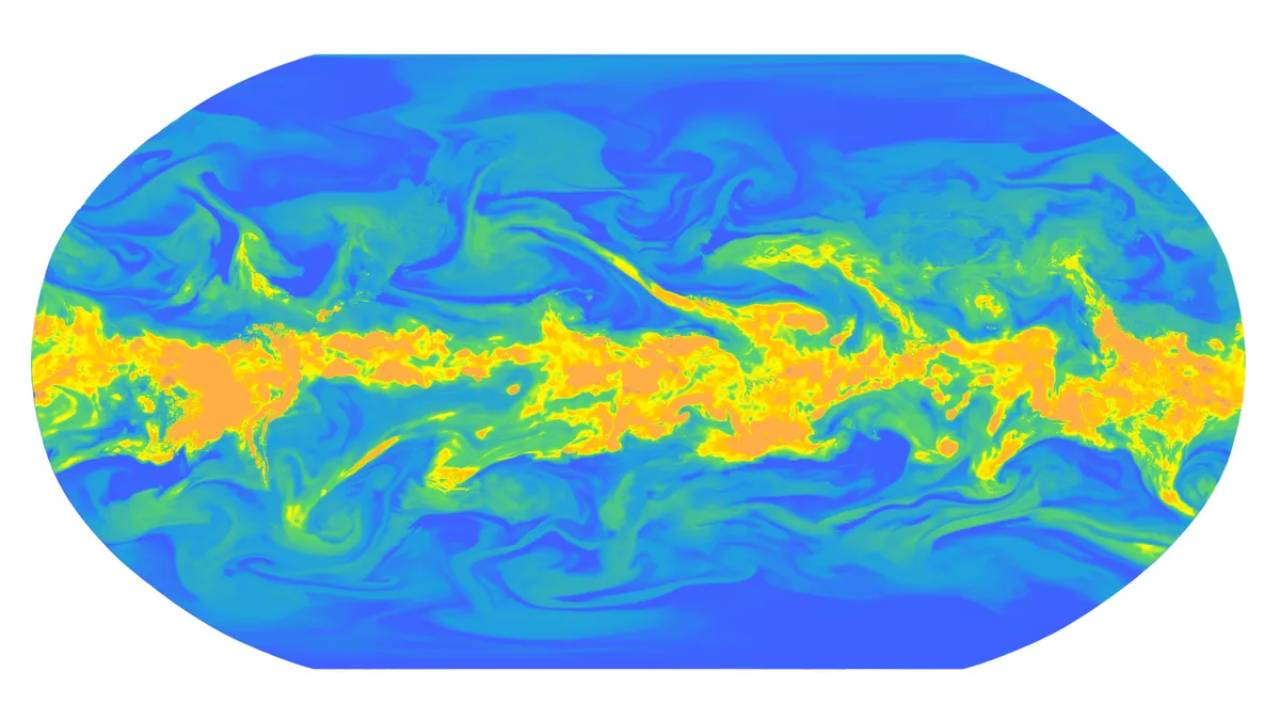

Tesla’s Annual Sales Decline 9% Amid Rising Competition from BYD and Loss of U.S. Tax Credit Google DeepMind Unveils WeatherNext 2: Revolutionizing AI-Based Weather Forecasting

Google DeepMind Unveils WeatherNext 2: Revolutionizing AI-Based Weather Forecasting NousCoder-14B: Open-Source AI Coding Model Challenges Big Tech in Software Development

NousCoder-14B: Open-Source AI Coding Model Challenges Big Tech in Software Development