Meta Advances Multimodal AI with SAM 3 Release

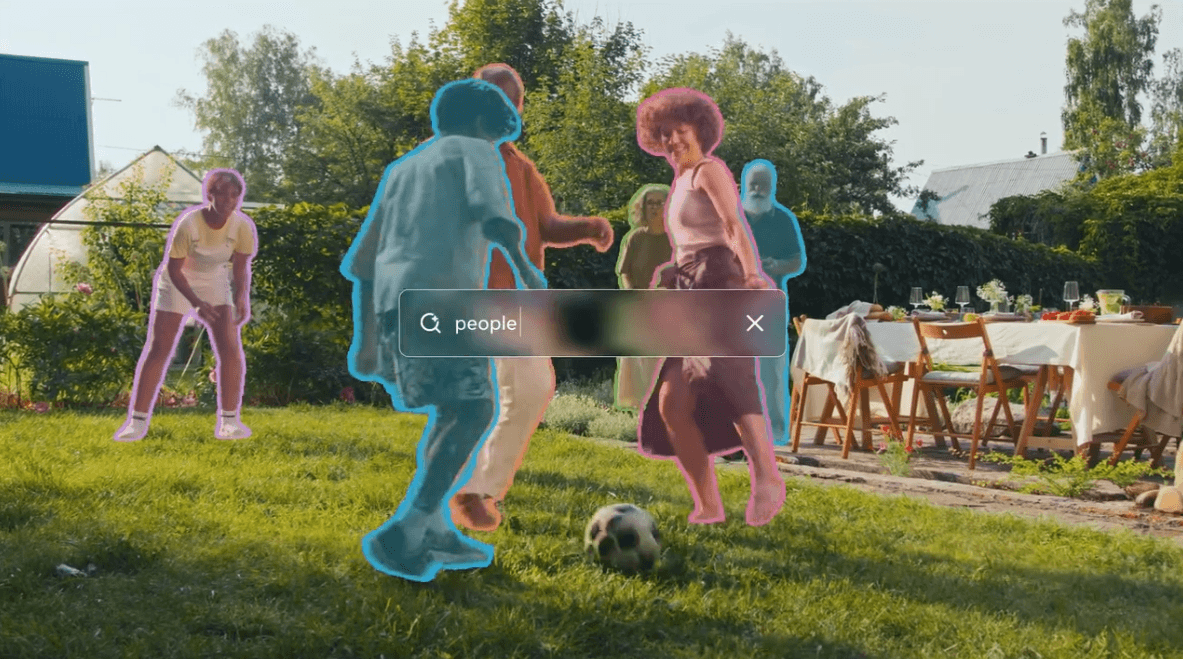

Meta Platforms has announced SAM 3, the latest iteration of its Segment Anything Model. This third-generation model marks a significant step forward in combining language and visual data processing within a single multimodal system.

From Fixed Categories to Open Vocabulary

Traditional segmentation models typically operate within a predefined set of categories, which limits how well they adapt to diverse real-world data. SAM 3 departs from this paradigm by adopting an open-vocabulary framework: the model can interpret and segment both images and videos without being constrained to fixed labels, allowing a richer and more nuanced understanding of visual content.
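To make the contrast concrete, here is a minimal toy sketch of closed-set labeling versus open-vocabulary matching. It is not SAM 3's API or architecture: the hand-written three-dimensional embeddings, the function names, and the 0.8 threshold are all hypothetical, and a real system would obtain embeddings from learned image and text encoders.

```python
import math

# Hypothetical toy text embeddings; real systems learn these with a text encoder.
TEXT_EMBEDDINGS = {
    "dog": (1.0, 0.0, 0.0),
    "cat": (0.0, 1.0, 0.0),
    "striped umbrella": (0.0, 0.0, 1.0),
}

# A closed-set model only knows the labels it was trained on.
FIXED_LABELS = ("dog", "cat")


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def closed_set_label(region_embedding):
    """Pick the best label from the fixed list, even if none fits well."""
    return max(
        FIXED_LABELS,
        key=lambda label: cosine(region_embedding, TEXT_EMBEDDINGS[label]),
    )


def open_vocab_match(region_embedding, prompt, threshold=0.8):
    """Check whether a region matches a free-form text prompt (assumed threshold)."""
    return cosine(region_embedding, TEXT_EMBEDDINGS[prompt]) >= threshold


# Hypothetical embedding of an image region that actually shows an umbrella.
region = (0.2, 0.1, 0.9)

print(closed_set_label(region))                      # forced into a fixed label
print(open_vocab_match(region, "striped umbrella"))  # matched against the prompt
print(open_vocab_match(region, "dog"))
```

In this sketch the closed-set path has to assign one of its two known labels to the region, while the open-vocabulary path scores the region against whatever phrase the user supplies.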

Innovative Training Methodology

Central to SAM 3’s performance is a novel training approach that combines human expertise with artificial intelligence. The system relies on a collaborative annotation pipeline in which human annotators and AI algorithms jointly create high-quality training data. This hybrid method improves the model’s accuracy and its ability to generalize across varied visual domains.
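One common way to organize this kind of human-AI collaboration is to let a model propose annotations and send only the uncertain ones to people. The sketch below illustrates that general pattern under stated assumptions; the article does not describe how Meta's pipeline divides the work, so the `Proposal` type, the `route` function, and the 0.9 acceptance threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """A model-proposed annotation (hypothetical structure)."""
    image_id: str
    label: str
    confidence: float  # model's own score in [0, 1]


def route(proposals, accept_at=0.9):
    """Split proposals into auto-accepted ones and ones needing human review.

    The threshold is an assumed value for illustration only.
    """
    auto_accepted, needs_review = [], []
    for proposal in proposals:
        if proposal.confidence >= accept_at:
            auto_accepted.append(proposal)
        else:
            needs_review.append(proposal)
    return auto_accepted, needs_review


proposals = [
    Proposal("img-001", "bicycle", 0.97),
    Proposal("img-002", "traffic cone", 0.55),
    Proposal("img-003", "bicycle", 0.91),
]

auto_accepted, needs_review = route(proposals)
print([p.image_id for p in auto_accepted])  # high-confidence proposals
print([p.image_id for p in needs_review])   # sent to human annotators
```

The design choice here is that human effort concentrates on the cases the model is least sure about, while confident proposals pass through unchanged.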

Implications for AI Development and Applications

The release of SAM 3 aligns with broader industry trends emphasizing multimodal AI systems that integrate text, image, audio, and video inputs. Such advancements hold promise for numerous applications, including robotics, autonomous systems, content creation, and enhanced human-computer interaction.

Meta’s ongoing investment in open-source AI tools also contributes to the democratization of advanced machine learning resources, fostering innovation across the AI developer community.

Context within the AI Ecosystem

Meta’s SAM series reflects the competitive momentum among tech giants like OpenAI, Google DeepMind, and NVIDIA to develop sophisticated AI models that blur the lines between different data modalities. These efforts are critical as the industry moves towards more generalized AI systems capable of understanding and reasoning across multiple types of inputs.

Looking Forward

As AI models continue to evolve, integrating language and vision remains both a key challenge and an opportunity. SAM 3’s open-vocabulary design and human-AI collaborative training represent promising directions for future research and commercial deployment. As the scope of AI capabilities expands, they may also influence discussions of AI safety, alignment, and regulation.
