Nous Research, an open-source artificial intelligence startup backed by crypto venture capital firm Paradigm, has introduced NousCoder-14B, an AI model built for competitive programming. Launched in April 2025, the model matches or surpasses several larger proprietary coding systems, according to Nous Research, despite having been trained in only four days on 48 of Nvidia’s latest B200 GPUs.

The release comes amid heightened interest in AI coding assistants, notably Anthropic’s Claude Code, which has drawn widespread attention from developers on social media for its ability to handle software development end to end.

How NousCoder-14B Stands Out in AI Coding

NousCoder-14B achieved a 67.87% accuracy rate on the LiveCodeBench v6 benchmark, which evaluates models on competitive programming problems published from August 2024 to May 2025. This represents a 7.08 percentage point improvement over its base model, Alibaba’s Qwen3-14B, according to Nous Research’s technical report.
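
A percentage-point gain is not the same as a relative gain. The short calculation below, a sketch using only the two figures reported above, derives the base model’s implied score and expresses the 7.08-point improvement in relative terms. The Qwen3-14B score here is computed from the reported numbers, not quoted from the technical report.

```python
# Reported LiveCodeBench v6 figures, in percent.
nouscoder_acc = 67.87
gain_pp = 7.08  # improvement over Qwen3-14B, in percentage points

# Implied base-model score, derived from the two reported numbers.
base_acc = round(nouscoder_acc - gain_pp, 2)

# The same improvement expressed relative to the base score.
relative_gain = gain_pp / base_acc * 100

print(f"Implied Qwen3-14B score: {base_acc:.2f}%")
print(f"Relative improvement: {relative_gain:.1f}%")
```

In other words, a 7.08-point jump from a roughly 60.8% baseline corresponds to a relative improvement of about 11.6%.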

Joe Li, the Nous Research researcher who trained the model and a former competitive programmer, compared the model’s rapid improvement with his own progress on Codeforces, a competitive programming platform. Li’s climb from novice to advanced took nearly two years and about 1,000 solved problems; NousCoder-14B made a similar leap in four days of training on 24,000 problems.

Radical Openness: Reproducibility and Transparency

What distinguishes NousCoder-14B from many proprietary AI models is its commitment to openness. Nous Research has publicly released not only the model weights but also the complete reinforcement learning environment, benchmark suite, and training harness, all built on its Atropos framework. This transparency allows other researchers with sufficient compute to reproduce or extend the model’s capabilities.

Such openness matters for the AI research community: it provides infrastructure for reproducible, olympiad-level reasoning research and makes it easier for others to build on the work.

Advanced Reinforcement Learning Techniques

NousCoder-14B was trained with reinforcement learning from verifiable rewards: each generated solution is executed against a set of test cases, and the model receives a binary reward (correct or incorrect). Running this at scale required robust infrastructure; Nous Research used the cloud platform Modal to execute code in parallel sandboxes with strict time and memory limits.
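
A binary, test-based reward of this kind can be sketched locally as follows. This is a minimal stand-in, not Nous Research’s code: it does not use Modal’s API, it assumes submissions are Python programs run with the local interpreter, and the function name `verify`, the default limits, and the whitespace-insensitive output comparison are all hypothetical choices. The memory cap relies on POSIX `resource.setrlimit`, so the sketch assumes Linux.

```python
import resource
import subprocess
import sys


def _limit_memory(max_bytes: int):
    """Return a preexec_fn that caps the child's address space (POSIX/Linux only)."""
    def apply() -> None:
        resource.setrlimit(resource.RLIMIT_AS, (max_bytes, max_bytes))
    return apply


def verify(source: str, tests: list[tuple[str, str]],
           time_limit_s: float = 2.0, memory_limit_mb: int = 512) -> int:
    """Hypothetical verifier: 1 if the program passes every (stdin, expected) pair, else 0."""
    for stdin_data, expected in tests:
        try:
            result = subprocess.run(
                [sys.executable, "-c", source],
                input=stdin_data,
                capture_output=True,
                text=True,
                timeout=time_limit_s,  # child is killed if it exceeds the time limit
                preexec_fn=_limit_memory(memory_limit_mb * 1024 * 1024),
            )
        except subprocess.TimeoutExpired:
            return 0  # time limit exceeded counts as incorrect
        # A non-zero exit (crash, memory error) or a wrong answer also yields 0.
        # Outputs are compared token by token, ignoring whitespace differences.
        if result.returncode != 0 or result.stdout.split() != expected.split():
            return 0
    return 1


if __name__ == "__main__":
    square = "n = int(input())\nprint(n * n)"
    print(verify(square, [("3\n", "9\n"), ("10\n", "100\n")]))  # 1
    print(verify(square, [("3\n", "10\n")]))                    # 0
```

Returning 0 at the first failing test mirrors the all-or-nothing nature of the reward: a solution that passes most tests earns nothing.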

The training used DAPO (Decoupled Clip and Dynamic Sampling Policy Optimization), whose dynamic sampling step discards problems on which all of the model’s sampled attempts succeed or all fail, since such problems provide no useful learning signal. The context window was also expanded iteratively: training started at 32,000 tokens, and final evaluation used approximately 80,000 tokens, which gave the best accuracy.
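
The filtering idea behind DAPO’s dynamic sampling can be sketched in a few lines. This assumes GRPO-style group-normalized advantages, which DAPO builds on: several solutions are sampled per problem, and each solution’s advantage is its reward minus the group mean, divided by the group standard deviation. If every sample in a group gets the same reward, all advantages are zero and the group contributes no gradient. The function names are hypothetical, and the sketch omits the rest of DAPO (decoupled clipping, token-level loss, and re-sampling to refill the batch).

```python
from statistics import mean, pstdev


def group_advantages(rewards: list[int]) -> list[float]:
    """Group-relative advantages: (r - mean) / std over one problem's sampled solutions."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / sigma for r in rewards]


def dynamic_sampling(batch: dict[str, list[int]]) -> dict[str, list[float]]:
    """Keep only problems whose binary rewards are mixed.

    `batch` maps a problem id to the rewards of its sampled solutions.
    All-correct or all-incorrect groups have zero variance, hence zero
    advantage, so they are dropped instead of wasting a training step.
    """
    kept = {}
    for problem_id, rewards in batch.items():
        if 0 < sum(rewards) < len(rewards):  # at least one pass and one fail
            kept[problem_id] = group_advantages(rewards)
    return kept


if __name__ == "__main__":
    batch = {"too_easy": [1, 1, 1, 1], "too_hard": [0, 0, 0, 0], "useful": [1, 0, 0, 1]}
    print(dynamic_sampling(batch))  # {'useful': [1.0, -1.0, -1.0, 1.0]}
```

Filtering before the update keeps every example in the batch informative, which is the efficiency gain the technique is meant to deliver.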

Two further optimizations, overlapping inference with verification and running training asynchronously across multiple model instances, kept the GPU cluster highly utilized and shortened training time.
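
Overlapping inference with verification is essentially a producer-consumer pipeline: while one solution is being tested, the next is already being generated. The asyncio sketch below illustrates only that scheduling pattern. `generate_solution` and `verify_solution` are hypothetical stand-ins that simulate latency with `asyncio.sleep`, and the toy reward rule is arbitrary; a real system would dispatch GPU inference and sandboxed execution to separate workers.

```python
import asyncio


async def generate_solution(problem_id: int) -> str:
    """Hypothetical stand-in for a model inference call."""
    await asyncio.sleep(0.01)  # simulated generation latency
    return f"solution for problem {problem_id}"


async def verify_solution(problem_id: int, solution: str) -> int:
    """Hypothetical stand-in for sandboxed test execution; returns a binary reward."""
    await asyncio.sleep(0.01)  # simulated execution latency
    return 1 if problem_id % 2 == 0 else 0  # toy rule: even-numbered problems "pass"


async def producer(problem_ids, queue: asyncio.Queue) -> None:
    # Generate solutions one after another and hand each off immediately.
    for pid in problem_ids:
        solution = await generate_solution(pid)
        await queue.put((pid, solution))
    await queue.put(None)  # sentinel: generation finished


async def consumer(queue: asyncio.Queue, rewards: dict) -> None:
    # Verify each solution as soon as it arrives, while the producer keeps generating.
    while (item := await queue.get()) is not None:
        pid, solution = item
        rewards[pid] = await verify_solution(pid, solution)


async def pipeline(problem_ids) -> dict:
    queue: asyncio.Queue = asyncio.Queue(maxsize=8)  # bounded so generation cannot run far ahead
    rewards: dict = {}
    await asyncio.gather(producer(problem_ids, queue), consumer(queue, rewards))
    return rewards


if __name__ == "__main__":
    print(asyncio.run(pipeline(range(4))))  # {0: 1, 1: 0, 2: 1, 3: 0}
```

Run sequentially, each problem would cost generation time plus verification time; pipelined, the two stages overlap, so total time approaches that of the slower stage alone.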

Data Scarcity Challenges in AI Coding

Joe Li’s technical report highlights an emerging challenge: the scarcity of high-quality, verifiable competitive programming data. The 24,000 problems used for training represent a substantial portion of all publicly available, standardized competitive programming problems, suggesting that further progress will require approaches other than simply adding more data.

Unlike natural language tasks, where quality can be judged more subjectively, programming requires precise verification of correctness, which makes synthetic data notably difficult to generate: every new problem needs correct reference solutions and test cases. Li suggests a future direction in which models both solve problems and generate new, solvable ones, enabling self-play training similar to the methods used in game-playing AI.

Strategic Investment and Open-Source Commitment

Nous Research has secured $65 million in funding, including a $50 million round led by Paradigm, signaling strong investor confidence in decentralized and open-source AI training approaches. The funding supports continued development of the company’s Psyche platform; earlier releases include Hermes 4, a model family reported to outperform ChatGPT without content restrictions.

Despite some skepticism in the community regarding the company’s anime-inspired branding and benchmarking claims, Nous Research’s open-source strategy and technical achievements have established it as a notable player challenging Big Tech’s dominance in AI coding assistance.

Future Directions for AI Coding Models

Looking ahead, Nous Research identifies key areas to enhance AI coding tools, including:

- Multi-turn reinforcement learning to incorporate intermediate feedback such as compilation errors and time limit violations, which could improve iterative problem-solving.

- Better control over response length, since incorrect solutions tend to run excessively long and can exhaust the model’s context window.

- Problem generation and self-play techniques to overcome data limitations by enabling models to create their own training problems, fostering continuous learning.
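
For response length, one existing technique is the overlong reward shaping described in the DAPO paper, named here as a swapped-in illustration rather than Nous Research’s announced method. A soft penalty of 0 applies inside a safe zone, ramps linearly from 0 to -1 over a buffer of `cache_len` tokens before the hard limit `max_len`, and stays at -1 beyond it. The function names are hypothetical; the 32,000-token limit echoes the starting context size mentioned earlier, and the 4,000-token buffer is an arbitrary illustrative value.

```python
def overlong_penalty(length: int, max_len: int, cache_len: int) -> float:
    """Soft length penalty in the style of DAPO's overlong reward shaping."""
    safe_limit = max_len - cache_len
    if length <= safe_limit:
        return 0.0  # no penalty inside the safe zone
    if length <= max_len:
        # Linear ramp from 0 down to -1 across the final cache_len tokens.
        return (safe_limit - length) / cache_len
    return -1.0  # responses past the hard limit get the full penalty


def shaped_reward(passed: bool, length: int,
                  max_len: int = 32_000, cache_len: int = 4_000) -> float:
    """Binary correctness reward plus the length penalty (illustrative defaults)."""
    return float(passed) + overlong_penalty(length, max_len, cache_len)


if __name__ == "__main__":
    print(shaped_reward(True, 20_000))   # 1.0: correct and comfortably short
    print(shaped_reward(True, 30_000))   # 0.5: correct but deep in the buffer zone
    print(shaped_reward(False, 33_000))  # -1.0: wrong and over the limit
```

Because the penalty grows gradually, the model is nudged toward shorter answers before it ever hits the context ceiling, rather than receiving a sudden cliff in reward.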

NousCoder-14B is available now on Hugging Face under an Apache 2.0 license, along with the complete Atropos training stack for developers and researchers interested in further exploration.

As AI models rapidly close the gap with human programmers in competitive coding, the question is shifting from whether machines can learn to code to whether they can surpass humans as educators and innovators in software development.

OpenAI Labels AI Expert Stuart Russell a ‘Doomer’ in Court Despite CEO’s Past Warnings

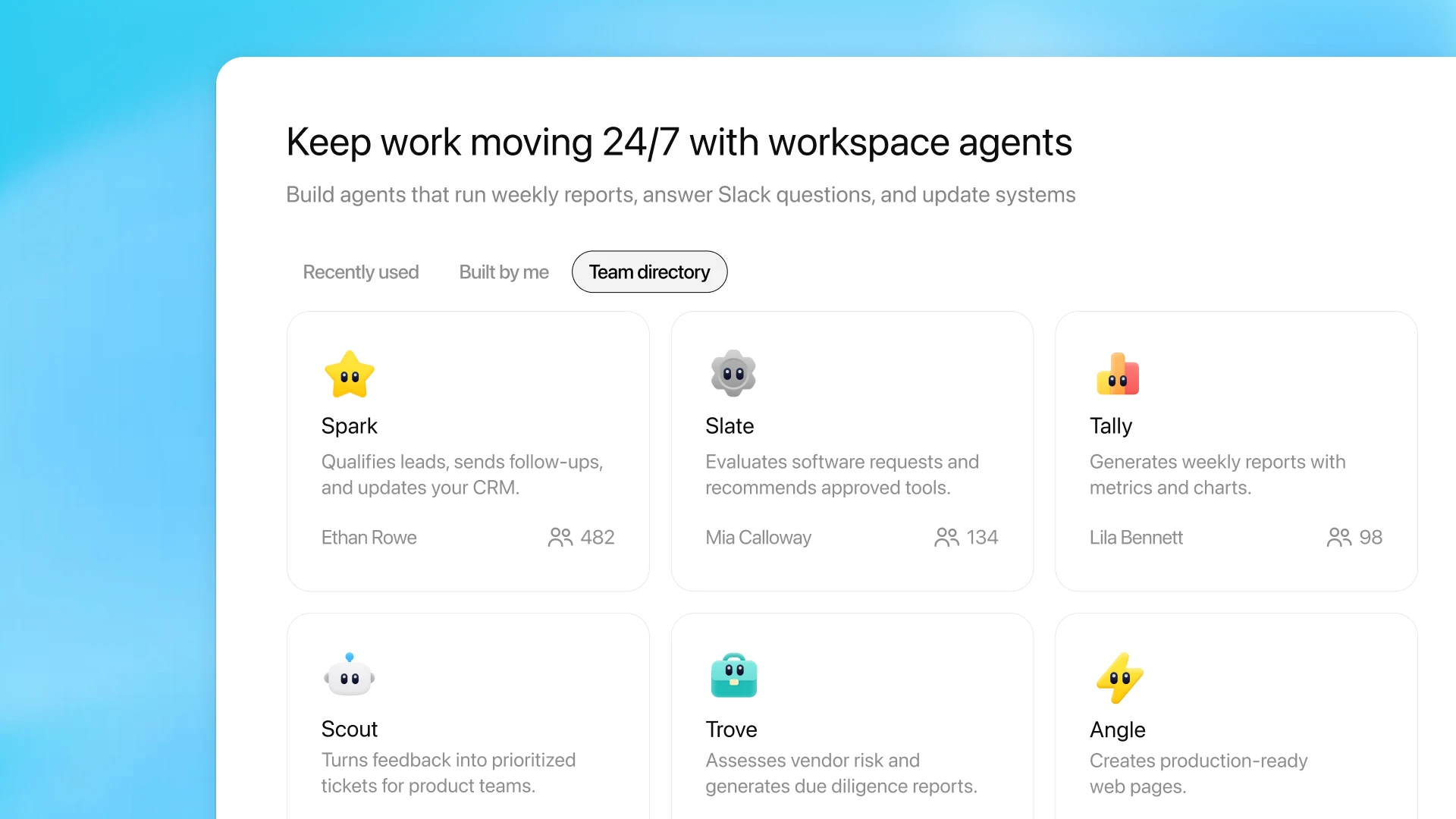

OpenAI Labels AI Expert Stuart Russell a ‘Doomer’ in Court Despite CEO’s Past Warnings OpenAI Introduces Workspace Agents to Transform ChatGPT into a Team Automation Platform

OpenAI Introduces Workspace Agents to Transform ChatGPT into a Team Automation Platform Infosys Unveils Comprehensive AI Implementation Framework to Guide Business Leaders

Infosys Unveils Comprehensive AI Implementation Framework to Guide Business Leaders Former Harvard Dropouts Plan Launch of AI-Powered Smart Glasses with Continuous Audio Recording

Former Harvard Dropouts Plan Launch of AI-Powered Smart Glasses with Continuous Audio Recording